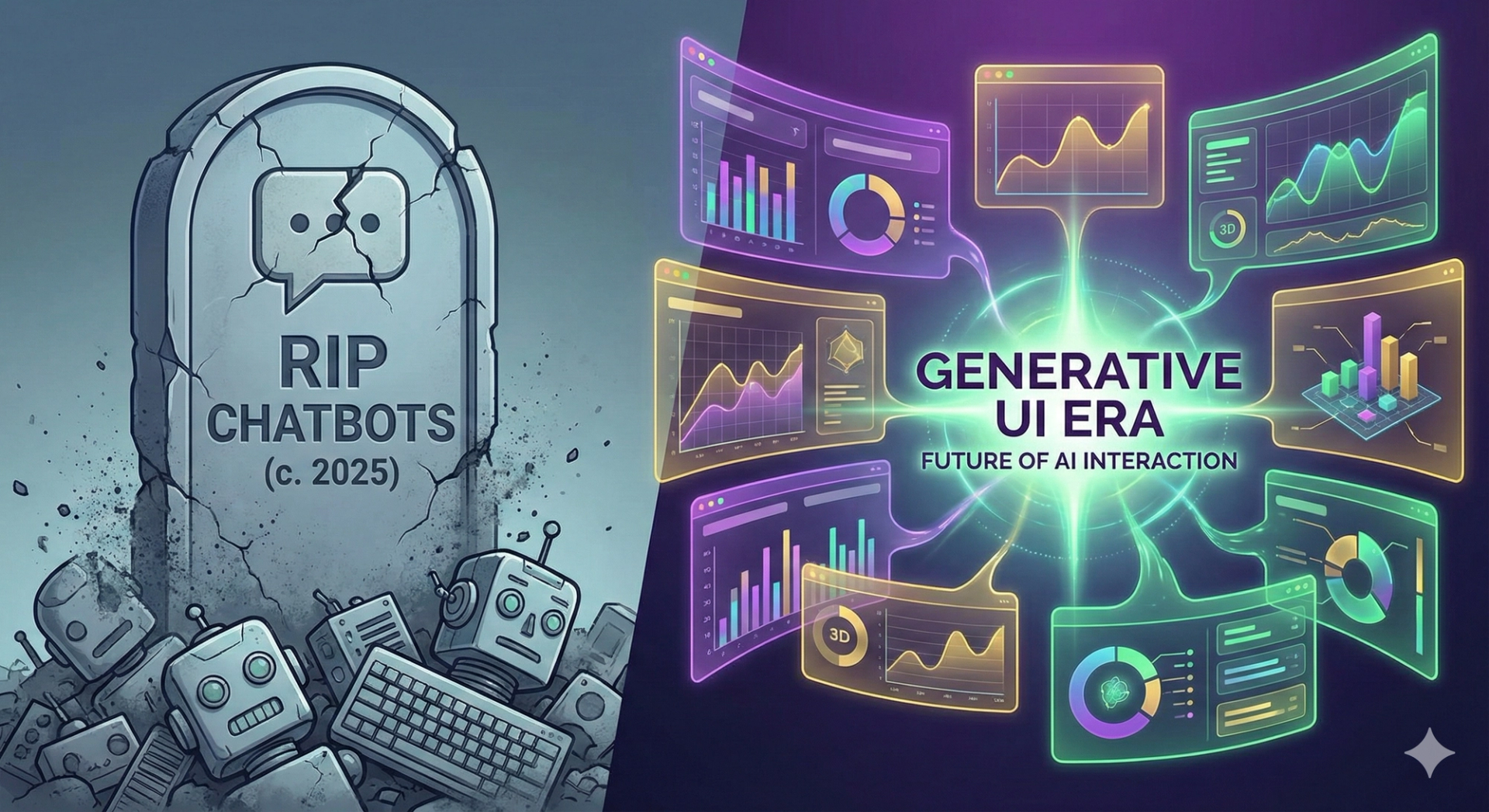

We are stuck in the 'Lazy Era' of AI design. Chat interfaces are boring, inefficient, and bad for complex tasks.

We are currently living through the "Lazy Era" of AI product design.

Since ChatGPT launched in late 2022, almost every founder, PM, and designer has adopted the same lazy pattern for their AI integration: The Chatbot Sidepanel.

You know the drill. You have your main application on the left, and a little chat window on the right where you type messages to an AI. It feels tacked on. It feels like a separate app. And frankly, it's boring.

For 90% of business use cases—analytics, finance, project management—Chat is a terrible interface.

Why? Because chatting is slow. Reading paragraphs of text to find a single data point is inefficient. A chart, table, or dashboard lets us spot a value or a trend at a glance, far faster than parsing the same numbers out of prose.

We need to move beyond the chatbot. We need Generative UI (GenUI).

Generative UI is a paradigm shift where the AI doesn't just generate text; it generates the interface itself in real-time, tailored to the user's immediate need.

It is the difference between asking for directions and getting a paragraph of text versus getting an interactive Google Map.

User: "Show me my sales for Q4 2025."

AI Bot: "Sure. Your sales in Q4 were $150,000. October was $40k, November was $50k, and December was $60k. That is a 20% increase over Q3."

Problem: The user has to read and parse the data mentally.

User: "Show me my sales for Q4 2025."

AI Agent: [Instantly renders an interactive React Bar Chart component directly in the feed, with hover-over tooltips for each month and a 'Download CSV' button.]

Solution: The interface is the answer. The friction is zero.
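To make "the interface is the answer" concrete, here is a minimal sketch of the data an agent would hand to a bar chart for the exchange above, plus the payload behind the 'Download CSV' button. The `SalesChartProps` shape and the `buildSalesChartProps` and `toCsv` helpers are hypothetical names for illustration and do not come from any charting library. The Q3 total of $125,000 is not stated in the example; it is implied by the 20% increase.

```typescript
// Hypothetical prop shape for a bar chart component; not tied to any charting library.
interface MonthlySales {
  month: string;
  amountUsd: number;
}

interface SalesChartProps {
  title: string;
  bars: MonthlySales[];
  totalUsd: number;
  // Percentage change versus the previous quarter, rounded to one decimal place.
  changeVsPreviousPct: number;
}

function buildSalesChartProps(
  title: string,
  bars: MonthlySales[],
  previousQuarterTotalUsd: number
): SalesChartProps {
  const totalUsd = bars.reduce((sum, b) => sum + b.amountUsd, 0);
  const rawChange = ((totalUsd - previousQuarterTotalUsd) / previousQuarterTotalUsd) * 100;
  return { title, bars, totalUsd, changeVsPreviousPct: Math.round(rawChange * 10) / 10 };
}

// Payload for a "Download CSV" button: a header row plus one row per month.
function toCsv(bars: MonthlySales[]): string {
  const rows = bars.map((b) => `${b.month},${b.amountUsd}`);
  return ["month,amount_usd"].concat(rows).join("\n");
}

// Figures from the example; the Q3 total (125000) is inferred from the stated 20% increase.
const q4Props = buildSalesChartProps(
  "Sales, Q4 2025",
  [
    { month: "October", amountUsd: 40000 },
    { month: "November", amountUsd: 50000 },
    { month: "December", amountUsd: 60000 },
  ],
  125000
);
const q4Csv = toCsv(q4Props.bars);
```

The same three numbers that took a paragraph to read become props the chart can draw, label, and export without any extra round trip to the model.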

Building a Chatbot is easy. Building GenUI is hard. It requires a fundamental rethink of your frontend architecture.

At CodeStreaks, we have moved away from building static Next.js pages for our clients. We are now building "Dynamic Component Systems."

We use tools like the Vercel AI SDK 3.0, which supports Generative UI through a function called `render()` (later SDK releases replace it with `streamUI`).

Instead of the LLM just streaming text, it streams structured data that our frontend interprets. The LLM decides: "This answer requires a chart, not text." It then calls a predefined UI component, let's say `<SalesChart data={...} />`, and streams the props to it. The user sees the chart materialize instantly.

We aren't just theorizing; we are deploying this for clients today.
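The "LLM picks a predefined component and sends its props" flow described above can be sketched without any SDK at all. The snippet below is a framework-agnostic illustration, not the Vercel AI SDK API: `UiInstruction`, `parseUiInstruction`, and `describeRender` are hypothetical names, the renderer returns a plain string in place of a React element so the example runs on its own, and streaming is left out to keep it short.

```typescript
// Hypothetical contract: the only payloads the model is allowed to emit.
type UiInstruction =
  | { component: "SalesChart"; props: { data: { label: string; value: number }[] } }
  | { component: "Text"; props: { body: string } };

// Model output is untrusted input, so validate it before it reaches the UI layer.
// Anything malformed or unknown degrades to plain text instead of throwing.
function parseUiInstruction(raw: string): UiInstruction {
  const fallback: UiInstruction = { component: "Text", props: { body: raw } };
  let parsed: any;
  try {
    parsed = JSON.parse(raw);
  } catch {
    return fallback;
  }
  if (parsed === null || typeof parsed !== "object") return fallback;
  const props = parsed.props;
  if (props === null || typeof props !== "object") return fallback;

  if (parsed.component === "SalesChart" && Array.isArray(props.data)) {
    const wellFormed = props.data.every(
      (d: any) =>
        d !== null && typeof d === "object" &&
        typeof d.label === "string" && typeof d.value === "number"
    );
    if (wellFormed) return { component: "SalesChart", props: { data: props.data } };
  }
  if (parsed.component === "Text" && typeof props.body === "string") {
    return { component: "Text", props: { body: props.body } };
  }
  return fallback;
}

// Stand-in for the real renderer: returns a description instead of a React element.
function describeRender(instruction: UiInstruction): string {
  switch (instruction.component) {
    case "SalesChart":
      return `SalesChart with ${instruction.props.data.length} bars`;
    case "Text":
      return `Text: ${instruction.props.body}`;
  }
}

// Example payload a model might emit for the Q4 sales question.
const modelOutput =
  '{"component":"SalesChart","props":{"data":[{"label":"Oct","value":40000},' +
  '{"label":"Nov","value":50000},{"label":"Dec","value":60000}]}}';
const rendered = describeRender(parseUiInstruction(modelOutput));
```

The design choice worth noting is the closed set of components: the model never writes UI code, it only selects from a registry you control, and anything it gets wrong falls back to ordinary text.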

Imagine a CRM where there are no pre-set dashboards. You ask, "Who are my riskiest leads right now?" The AI generates a custom table with columns for "Last Contact," "Sentiment Score," and a "Draft Email" button. It built a mini-app just for that question.
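A minimal sketch of what that "mini-app" could look like as data, assuming the agent has already fetched the leads. Everything here is hypothetical: the `CrmLead` fields, the `LeadTableSpec` shape, the added "Lead" name column, and especially the `riskScore` heuristic, which is invented purely for illustration.

```typescript
interface CrmLead {
  name: string;
  daysSinceLastContact: number;
  sentimentScore: number; // assumed range: -1 (negative) to 1 (positive)
}

// Declarative description of the table the frontend should render.
interface LeadTableSpec {
  columns: string[];
  rows: string[][];
  rowAction: string; // label of the button shown on every row
}

// Invented heuristic for illustration only: silence and negative sentiment both raise risk.
function riskScore(lead: CrmLead): number {
  return lead.daysSinceLastContact * (1 - lead.sentimentScore);
}

function buildRiskiestLeadsTable(leads: CrmLead[], limit: number): LeadTableSpec {
  // Copy before sorting so the caller's array is left untouched.
  const ranked = leads
    .slice()
    .sort((a, b) => riskScore(b) - riskScore(a))
    .slice(0, limit);
  return {
    columns: ["Lead", "Last Contact", "Sentiment Score"],
    rows: ranked.map((l) => [
      l.name,
      `${l.daysSinceLastContact} days ago`,
      l.sentimentScore.toFixed(2),
    ]),
    rowAction: "Draft Email",
  };
}

// Made-up sample data.
const leadTable = buildRiskiestLeadsTable(
  [
    { name: "Acme Corp", daysSinceLastContact: 3, sentimentScore: 0.8 },
    { name: "Globex", daysSinceLastContact: 21, sentimentScore: -0.4 },
    { name: "Initech", daysSinceLastContact: 10, sentimentScore: 0.1 },
  ],
  2
);
```

With this sample data the table lists Globex first and Initech second, each row carrying a "Draft Email" action.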

A CFO asks, "Why did our server costs spike yesterday?" Instead of a text explanation, the AI renders a line graph of AWS usage overlaid with a timeline of recent code deployments, allowing the CFO to visually correlate the two events.
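Behind an overlay like that sits a small piece of data preparation: find the spike, then pick out the deployments that landed just before it so the chart knows what to highlight. The sketch below assumes hourly cost samples sorted by time; the `CostSample`, `DeployEvent`, and `findSpikeOverlay` names, the look-back window, and the sample data are all hypothetical. Proximity in time is only a hint for the viewer, not proof of cause.

```typescript
interface CostSample {
  hour: number; // hour of the day, samples assumed sorted ascending
  usd: number;
}

interface DeployEvent {
  hour: number;
  label: string;
}

interface SpikeOverlay {
  spikeHour: number;
  increaseUsd: number;
  deploymentsBeforeSpike: string[];
}

// Find the largest hour-over-hour cost increase and list the deployments
// that happened within `windowHours` before (or at) that hour.
function findSpikeOverlay(
  costs: CostSample[],
  deploys: DeployEvent[],
  windowHours: number
): SpikeOverlay | null {
  if (costs.length < 2) return null; // need at least two samples to measure a jump
  let spikeIndex = 1;
  for (let i = 2; i < costs.length; i++) {
    const jump = costs[i].usd - costs[i - 1].usd;
    const best = costs[spikeIndex].usd - costs[spikeIndex - 1].usd;
    if (jump > best) spikeIndex = i;
  }
  const spikeHour = costs[spikeIndex].hour;
  return {
    spikeHour,
    increaseUsd: costs[spikeIndex].usd - costs[spikeIndex - 1].usd,
    deploymentsBeforeSpike: deploys
      .filter((d) => d.hour <= spikeHour && d.hour >= spikeHour - windowHours)
      .map((d) => d.label),
  };
}

// Made-up sample data.
const overlay = findSpikeOverlay(
  [
    { hour: 9, usd: 12 },
    { hour: 10, usd: 13 },
    { hour: 11, usd: 12 },
    { hour: 12, usd: 30 },
    { hour: 13, usd: 31 },
  ],
  [
    { hour: 8, label: "api release" },
    { hour: 11, label: "worker release" },
  ],
  2
);
```

For this sample, the spike is at hour 12 with an $18 jump, and only the "worker release" deployment falls inside the two-hour window.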

When you use our SEO tool Airwrite.pro, notice that it doesn't just spit out a blog post.

If you ask it to "Fix the SEO title," it renders a specific, interactive UI component with a character counter and a SERP preview. The AI is choosing the right UI tool for the job.
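As a rough illustration of what sits behind a component like that (not Airwrite.pro's actual code), the sketch below computes the two things the UI needs: a character count and a truncated preview. The `previewSeoTitle` helper and the 60-character budget are assumptions; 60 is a common rule of thumb, not a published limit.

```typescript
interface TitlePreview {
  length: number; // counted in UTF-16 code units, as String.length does
  withinLimit: boolean;
  serpTitle: string; // what the preview widget would display
}

// Assumption: a 60-character display budget. This is a rule of thumb, not a published limit.
const ASSUMED_TITLE_LIMIT = 60;

function previewSeoTitle(title: string, limit: number = ASSUMED_TITLE_LIMIT): TitlePreview {
  const trimmed = title.trim();
  const withinLimit = trimmed.length <= limit;
  // Leave room for the ellipsis and avoid ending on a dangling space.
  const serpTitle = withinLimit
    ? trimmed
    : trimmed.slice(0, limit - 3).replace(/\s+$/, "") + "...";
  return { length: trimmed.length, withinLimit, serpTitle };
}

const shortTitle = previewSeoTitle("Generative UI");
const longTitle = previewSeoTitle("Stop building chatbots", 8);
```

Here `shortTitle` passes through unchanged, while `longTitle` (checked against a deliberately tiny limit of 8) is cut to "Stop...".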

In 2026, SaaS products will be judged by their "Time-to-Insight."

Deliver the insight faster than your competitors do, and you win.

If you are building a B2B SaaS today, stop building chatbots. Start building interfaces that think.

Is your UI stuck in 2023? We help founders migrate from static dashboards to Generative Interfaces. Book a Technical Consultation with CodeStreaks.